Logo sketches: Shard

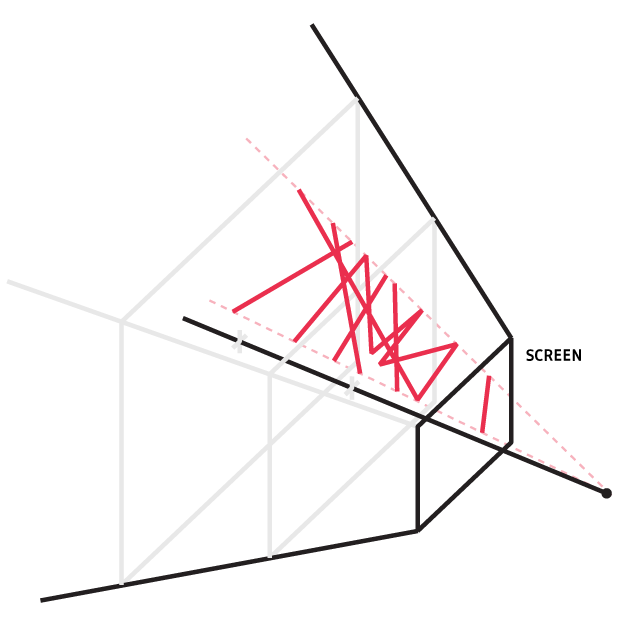

My logo already uses forced perspective to create an illusion, so why not really force it a little harder? Shard splits polygons at random vertices and carefully hurls them in front of a camera where they appear like the original. That is... until you put a spin on them!

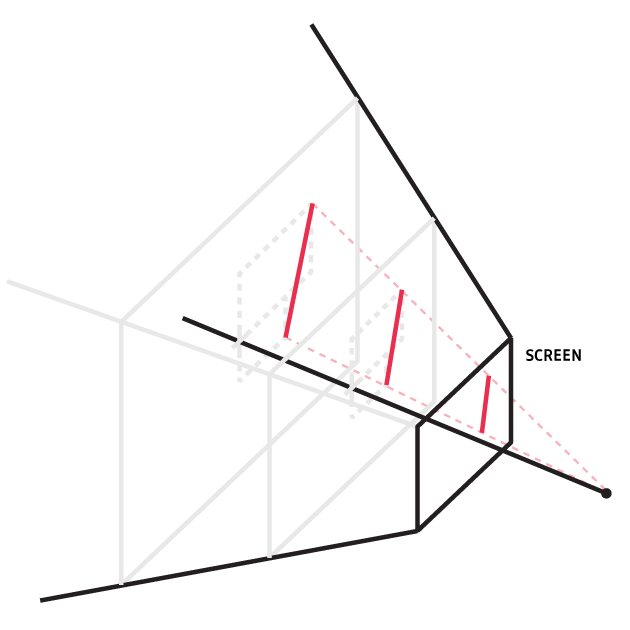

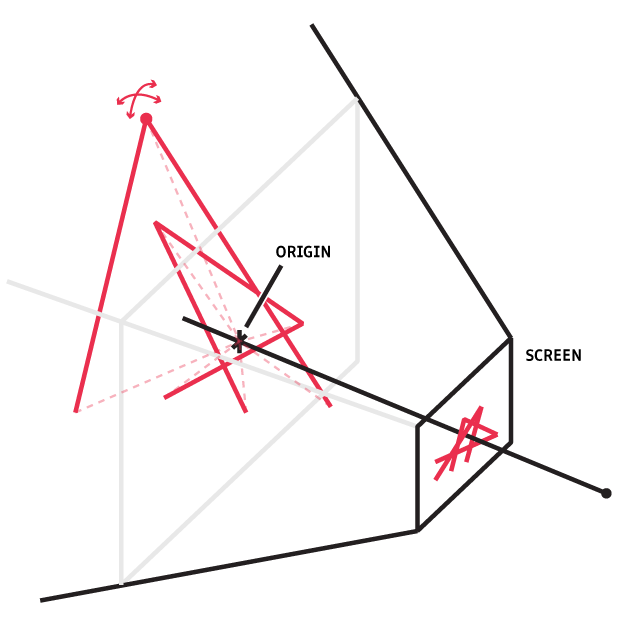

Randomizing the z dimension without changing the image is pretty simple at its core. In the orthographic case, the projection just grabs a ray from a point in the scene, through the screen, to the "focal point" of the camera, and draws it where it hit the screen. Then, any points along that ray will map to the same screen point, so moving a geometry's vertices along these rays won't change the image. Note that the vertices can be moved independently and it's totally still okay:

Despite the simplicity of its premise, there were some interesting considerations along the way. I have my favorites, which I'll discuss here:

-

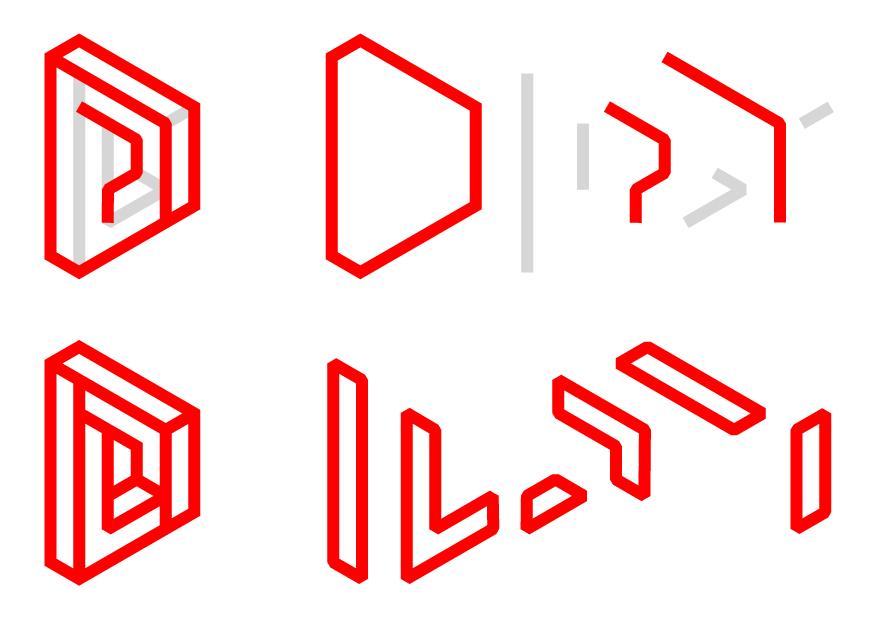

How can we generalize to sharding graphs instead of polygons? For example, this very convenient geometry I'll scoop up here has many non-overlapping polygon/polyline decompositions:

Note in the above: the shapes highlighted in red can't be formed by decompositions of the shapes in the other. Therefore, when we make up a geometry like that with polygons and polylines — whatever can be with a Pen tool — we have to be opinionated.

-

Is a 3D engine overkill?

Graph partitioning

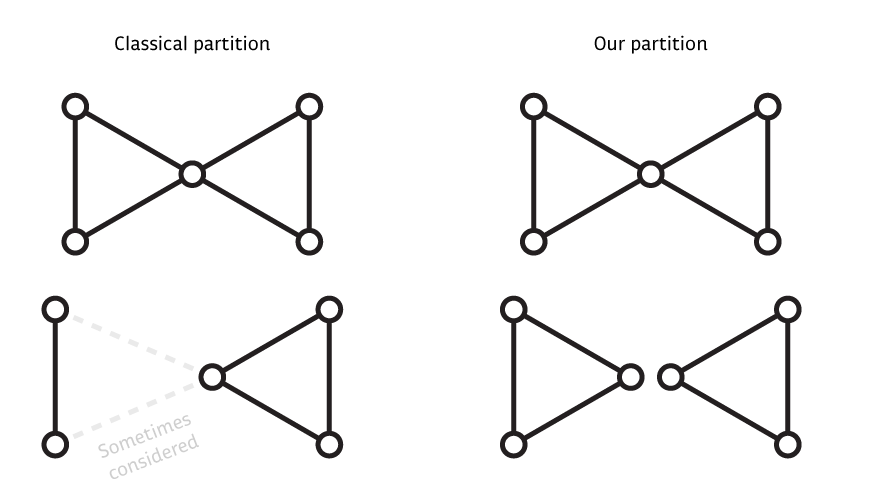

Great, we want to break our graphs at the joints, reassemble the tattered limbs and puppet them around! Hold on... our macabre aspirations our dependent on our ability to actually reassemble our graph's corpse. 2D Canvas primitives can only draw open and closed paths, which critically are graphs of max degree 2. We're in the territory of a constrained graph partitioning problem, which if we weren't careful could suddenly get very NP-hard. Canonically, we might demand that our polygons be of similar size for example. However, the crucial difference is optimization: for this purpose we actually want to be as unopinionated as possible so long as the resulting partition is drawable with polylines. We can actually be greedy!

Let's specify a bit more. Worth noting that this is not a graph partition as it's often defined in terms of vertices, because two daughter paths will both receive the vertices that their edges share.

The difference however is just a bit of extra housekeeping on cut edges, since the whole point is to keep them in the projection!

- Suppose we go a vertex and try to pair incident edges at random and terminate others. Let $n$ be the degree of this vertex of ours. We can produce every unique possibility locally pretty easily:

- We can choose a random number of pairs $p$ uniformly from 0 to $\lfloor n/2 \rfloor$

- We then shuffle the edge list and pair the first $p$ edges with the next $p$

- The remaining $n-2p$ edges mark the end (or start, if you're an optimist!) of their respective paths at our vertex.

- Note that since we don't delete or reroute the edges, the decisions at every vertex are totally independent! So long as we maintain the adjacencies we're creating in the daughters paths, we can iterate through the vertices exactly once and finish this in $\mathcal{O}(VE)$. Not shabby.

There is a caveat, since different numbers of pairs we choose have differing amounts of redundancy. Reveal the aside to see the derivation of an approximate proper weighting.

The unnormalized weight of each number of pairs $p$:

$$ W_p \approx \begin{cases} \exp\left[n\ln\left(\frac{n}{n-2p}\right) + p\left(1 + \ln\left(\frac{n-2p}{2p}\right)\right)\right] & 0 \le p < \lfloor\frac{n}{2}\rfloor\\ \exp(n-p)\frac{n-1}{2(p-1)} & p = \lfloor\frac{n}{2}\rfloor \end{cases} $$

Cooking up a camera

| V1, with THREE.js and default pixel density | V2, with DIY camera in 2D canvas and built-in pixel density |

Since this project is just drawing lines, it seemed a bit silly to invoke a full 3D graphics pipeline really just to do the math of the camera projection. On the left in the above figure is the first attempt using THREE.js that ran my site for a while. Three.JS minified still weighs 430KB, and added with the curiosity about performance of an opinionated rendering engine, I implemented my own which runs currently on the site.

- The camera sits at a fixed position offset along the z axis facing the origin.

- The camera uses a raytracer on only vertices to project the endpoints of edges onto the screen.

- Moving the mouse updates a global target rotation. A global rotation state tracks the target with a P controller and rotates all the objects in the world around the origin. (See figure below)

- GitHub